Istio vs. Linkerd: Service Mesh on Kubernetes

Table of contents

Both Istio and Linkerd are CNCF Graduated service meshes that provide automatic mTLS, traffic policy, and observability for Kubernetes workloads. Both run in sidecar mode for this comparison — a proxy container injected into every pod. The fundamental difference is the data plane: Istio uses Envoy, Linkerd uses its own Rust-based proxy (linkerd2-proxy). That choice drives the differences in overhead, extensibility, and egress control.

This post compares them on the dimensions that matter for a production deployment. For the broader question of Istio vs. Envoy Gateway (ingress-only), ambient mode, and managed cloud specifics (AKS, GKE, OVH MKS), see the companion post Istio vs. Envoy Gateway: Gateway API on Kubernetes.

TL;DR🔗

- Use Istio if you need advanced L7 policy, centralized egress control, or Envoy extensibility.

- Use Linkerd if you prioritize operational simplicity and low proxy overhead.

- Istio is broader and heavier; Linkerd is smaller and simpler.

- Both provide production-grade mTLS and observability for Kubernetes workloads.

Scope🔗

| Istio 1.29 | Linkerd 2.19 | |

|---|---|---|

| Data plane | Envoy | linkerd2-proxy (Rust) |

| Control plane | istiod | linkerd-control-plane |

| East-West mTLS | ✓ (Envoy sidecar) | ✓ (direct TLS via sidecar) |

| North-South (ingress) | ✓ (Istio Gateway) | ✓ (via separate ingress controller) |

| Ingress provisioning | built-in (istiod-managed Gateway pod) | external (separate controller required) |

| Egress Gateway | ✓ | ✗ |

| L7 policies (East-West) | ✓ (Envoy sidecar) | limited (HTTPRoute, AuthorizationPolicy) |

| Wasm / ext_proc extensions | ✓ | ✗ |

| CNCF status | Graduated | Graduated |

Architecture🔗

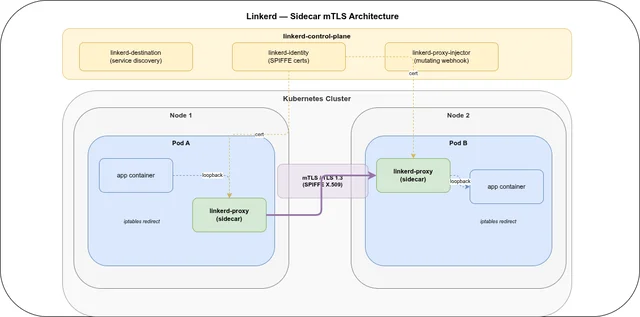

Istio (sidecar mode)🔗

In sidecar mode, Istio injects an Envoy proxy as a sidecar container into every pod via a mutating webhook. An istio-init init container sets up iptables rules that transparently redirect all inbound and outbound pod traffic through the local Envoy process. The application is unaware of the proxy and communicates in plaintext on the loopback.

istiod is the control plane: it acts as an xDS server, distributing routing configuration and traffic policies to all Envoy sidecars, and runs the certificate authority that issues SPIFFE X.509 credentials to each sidecar. L7 policies — header routing, retries, circuit breaking, JWT validation — are applied directly by the sidecar without any separate proxy.

Ingress is handled by a dedicated Envoy pod (Istio Gateway) managed by istiod, exposed via a LoadBalancer or NodePort service.

Istio also ships an ambient mode (sidecar-free, ztunnel DaemonSet) since 1.22 — that architecture is covered in the companion post. Sidecar mode is still fully supported and widely deployed; the two modes can coexist in the same cluster per namespace.

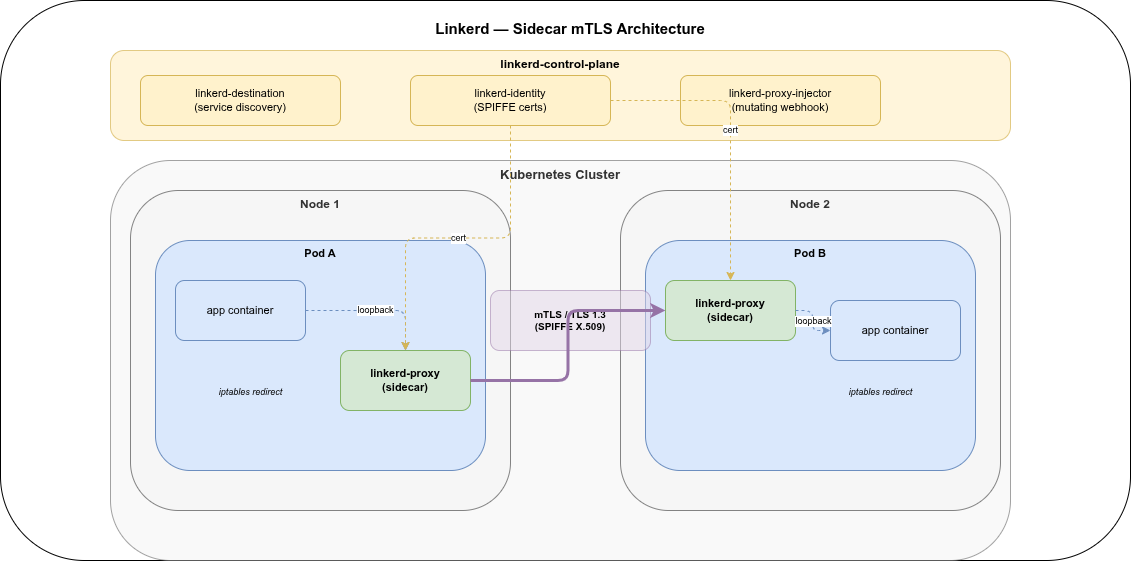

Linkerd (sidecar mode)🔗

Linkerd injects a lightweight sidecar (linkerd-proxy, written in Rust on Tokio) into every pod via a mutating webhook. All traffic in and out of the pod is transparently redirected through the proxy using iptables rules set up by an init container. The proxy handles mTLS negotiation, load balancing, and metrics collection.

The control plane has three small components:

linkerd-destination— service discovery and traffic policy distributionlinkerd-identity— issues SPIFFE X.509 certificates from the cluster trust anchorlinkerd-proxy-injector— mutating webhook that injects the sidecar

Ingress: Linkerd does not provision its own ingress pod. Instead, the pods of an existing ingress controller (Envoy Gateway, Nginx, Traefik, …) are annotated to participate in the mesh — the ingress proxy becomes just another meshed pod, and the ingress-to-backend leg is covered by mTLS automatically.

Ingress🔗

Istio provisions a dedicated Istio Gateway Envoy pod for each Gateway resource, managed entirely by istiod. The gateway pod has the Istio sidecar injected, so the gateway-to-backend leg is automatically covered by mTLS — no extra configuration needed. Certificate rotation, xDS configuration, and lifecycle management are all part of the Istio installation. One Helm chart, one upgrade path, one control plane.

Linkerd ships no ingress pod. You install, configure, and operate a separate ingress controller — Envoy Gateway, Nginx, Traefik, HAProxy, or any other. Linkerd’s proxy-injector can annotate the ingress controller’s pods so they participate in the mesh (ingress-to-backend mTLS works via the injected sidecar), but the controller’s lifecycle — installation, CRD management, upgrades, config — is completely decoupled from Linkerd. See the Using Ingress with Linkerd guide for controller-specific setup instructions.

| Istio | Linkerd | |

|---|---|---|

| Ingress pod provisioned by | istiod (automatic) | self-installed |

| Ingress-to-backend mTLS | ✓ (sidecar on Gateway pod) | ✓ (ingress pods meshed via annotation) |

| Ingress + mesh upgrade lifecycle | single (Istio) | two separate stacks |

| Ingress feature set | Envoy (Gateway API + VirtualService / EnvoyFilter) | depends on chosen controller |

| Flexibility in ingress choice | fixed (Envoy) | any controller |

Istio’s built-in ingress is the simpler operational model — no second stack to manage. The trade-off is that you are locked into Envoy as the ingress proxy. Linkerd’s decoupled model lets you keep an existing Nginx or Traefik installation, or combine with Envoy Gateway for its BackendTrafficPolicy and SecurityPolicy extensions, but you now maintain two independent stacks with separate release cycles, CRDs, and upgrade procedures.

mTLS and identity🔗

Both projects use SPIFFE X.509 SVIDs for workload identity and automatic certificate rotation. In sidecar mode the behavior is symmetric:

Istio — certificates are issued by istiod’s built-in CA, with identity derived from the Kubernetes Service Account of the pod. The Envoy sidecar enforces mTLS per meshed-pod pair. Pods without a sidecar communicate in plaintext — same as Linkerd. The PeerAuthentication CRD controls whether plaintext connections to a service are allowed (PERMISSIVE) or rejected (STRICT). AuthorizationPolicy controls which SPIFFE identities may connect.

Linkerd — certificates are issued by linkerd-identity from a trust anchor (a cluster-level CA certificate that operators bring or generate). mTLS is enforced per sidecar pair: a pod without an injected linkerd-proxy communicates in plaintext. The Server and AuthorizationPolicy CRDs control which SPIFFE identities are permitted to connect to a given service.

Both projects default to allowing plaintext from unmeshed pods to avoid breaking unmigrated workloads during rollout. Both enforce strict mTLS once all communicating pods are meshed.

Egress traffic🔗

Istio ships an Egress Gateway for centralized outbound control. When configured, pods route external traffic through the gateway pod (which is itself inside the mesh and therefore reachable via ztunnel mTLS):

Pod → Envoy sidecar ══ mTLS ══► Egress Gateway Pod → external serviceThe gateway enforces policies, produces a centralized audit log, and can originate TLS towards external services for HTTP, HTTPS, and plain TCP destinations (databases, etc.). External services are registered as ServiceEntry resources.

Linkerd has no egress gateway. Pods connect directly to external services. Linkerd can apply AuthorizationPolicy to outbound connections (allow/deny), but there is no centralized proxy, no forced TLS origination, and no audit log for external traffic. The gap is the same as for Cilium + Envoy Gateway: if centralized egress control is a requirement, Istio is the right choice.

L7 traffic policy🔗

| Feature | Istio | Linkerd |

|---|---|---|

| HTTPRoute (Gateway API) | ✓ | ✓ |

| Header-based routing (East-West) | ✓ (Waypoint Proxy) | ✓ (HTTPRoute) |

| Retries / timeouts (East-West) | ✓ | ✓ |

| Circuit breaking (East-West) | ✓ | limited |

| Traffic shifting / canary | ✓ | ✓ |

| OIDC / JWT at proxy | ✓ (SecurityPolicy / EnvoyFilter) | ✗ |

| Rate limiting at proxy | ✓ (EnvoyFilter / Wasm) | ✗ |

| Wasm filter / ext_proc | ✓ | ✗ |

Linkerd covers the common day-2 scenarios — canary deployments, retries, timeouts, header-based routing — via the HTTPRoute Gateway API resource. What it does not support is Envoy-based extensions: no Wasm filters, no ext_proc, no OIDC or rate limiting at the proxy level. For those features a separate ingress layer (Envoy Gateway or the cloud-native equivalent) is needed regardless, so the gap only matters if you want those policies to apply to East-West (service-to-service) traffic.

Resource overhead🔗

| Component | Istio 1.29 (sidecar) | Linkerd 2.19 |

|---|---|---|

| Control plane | ~500 MB (istiod) | ~50–100 MB (3 components) |

| Per-pod proxy | ~50 MB (Envoy sidecar) | ~10–30 MB (linkerd2-proxy) |

| Gateway / ingress pod | ~100 MB (Istio Gateway) | n/a (uses separate ingress) |

The dominant overhead difference is per-pod. Envoy is a general-purpose extensible proxy (C++), which gives it a larger baseline footprint. linkerd2-proxy is purpose-built in Rust for service mesh use only, with careful avoidance of heap allocation in the hot path. In a cluster with 100 pods: roughly 5 GB for Istio sidecars vs. ~2 GB for Linkerd proxies. Latency overhead is sub-millisecond for both; Linkerd’s Rust proxy tends to add slightly less.

The Istio control plane (istiod) is substantially heavier than Linkerd’s because it ships a full xDS server, a certificate authority, and Envoy proxy distribution infrastructure. Linkerd’s three small components have a much lower footprint.

Observability🔗

Istio emits detailed per-request metrics via Envoy’s stats subsystem (Prometheus format), supports distributed tracing via Envoy’s OpenTelemetry integration, and ships Kiali for service topology visualization. The out-of-the-box experience requires assembling a Prometheus + Grafana + Kiali + tracing stack.

Linkerd includes linkerd-viz, a lightweight add-on that deploys Prometheus + Grafana and a built-in web dashboard. The dashboard surfaces “golden metrics” — success rate, requests per second, and P99 latency — per service, route, and pod, without any additional configuration. Distributed tracing is available via OpenTelemetry. The linkerd top and linkerd viz tap CLI commands provide live per-request visibility. The observability story is simpler out of the box.

Multi-cluster🔗

| Istio | Linkerd | |

|---|---|---|

| Model | Primary-Remote or Multi-Primary | Linkerd Service Mirror |

| CNI requirement | none | none |

| Service discovery | istiod federated, shared trust domain | mirror controller copies remote Services locally |

| Cross-cluster mTLS | ✓ (shared trust domain) | ✓ (shared trust anchor) |

| East-West gateway | ✓ (dedicated gateway pod) | ✓ (link gateway pod) |

Linkerd’s Service Mirror model is conceptually simple: a controller on each cluster watches the remote cluster’s Services via the Kubernetes API and creates local mirror Services for them. DNS on cluster A resolves svc.svc-namespace.svc.cluster.local to a local mirror that forwards traffic to the remote cluster’s gateway. mTLS is enforced end-to-end because both clusters share the same trust anchor.

Istio’s multi-cluster models are more flexible (Primary-Remote for shared istiod, Multi-Primary for independent control planes) but also more complex to set up. The advantage is that Istio multi-cluster is CNI-agnostic — it works across AKS, GKE, and OVH MKS without requiring the same CNI on all clusters.

Compatibility matrices🔗

Istio 1.29🔗

| Istio | Release | EOL (approx.) | Kubernetes |

|---|---|---|---|

| 1.29 | 2026-02-16 | ~2026-08 | 1.26–1.35 |

| 1.28 | 2025-11-05 | ~2026-05 | 1.25–1.29 |

See istio.io/latest/docs/releases/supported-releases/ for the current list.

Linkerd 2.19🔗

| Linkerd | Kubernetes | Release |

|---|---|---|

| 2.19 | 1.29–1.33 | ~early 2026 |

Verify the exact supported Kubernetes range at linkerd.io/releases/.

Linkerd ships two release tracks: stable releases (major/minor, long-lived) and edge releases (weekly, rolling). Stable releases receive security patches; edge releases provide early access to new features. Only the latest stable release is supported at any given time.

Maturity🔗

| Istio | Linkerd | |

|---|---|---|

| First release | 2017 | 2016 |

| Current stable | 1.29 | 2.19 |

| CNCF status | Graduated | Graduated |

| Primary backers | Google, Red Hat, IBM | Buoyant |

| Data plane | Envoy | linkerd2-proxy (Rust) |

| Production-ready | Yes | Yes |

Both projects are CNCF Graduated and production-ready. Istio has a larger ecosystem, more third-party integrations, and a broader feature set. Linkerd is the older project (predating Istio by a year) and has a reputation for operational simplicity and low overhead.

When to use which🔗

Use Istio when:

- You need an Egress Gateway for centralized outbound control: audit logging, policy enforcement, or TLS origination for external services including plain TCP protocols.

- Rich L7 extensions at the mesh layer are required: Wasm filters,

ext_proc, OIDC, rate limiting between services. - You are already using Envoy Gateway for ingress (Istio and Envoy Gateway share the same data plane; the operational familiarity and tooling overlap is real).

- Multi-cloud or multi-cluster (AKS ↔ GKE) is in scope — Istio multi-cluster is CNI-agnostic.

- You want a future migration path to ambient mode: sidecar → ambient can be switched per namespace with the same istiod, no full re-installation required.

Use Linkerd when:

- Minimal proxy overhead per pod is the top priority. The Rust proxy adds less than 30 MB and sub-millisecond latency per pod — significantly less than Envoy.

- Operational simplicity is valued over feature breadth. Linkerd’s surface area is smaller and the learning curve is shorter.

- Built-in golden metrics without assembling an observability stack are attractive.

- You do not need an Egress Gateway.

- You already operate a separate ingress controller and want the mesh to complement it without replacing it — or you want to choose your ingress independently of the mesh.

FAQ🔗

Is Linkerd faster than Istio?🔗

Generally yes. Linkerd’s Rust-based linkerd2-proxy has lower memory overhead (~10–30 MB per pod vs. ~50 MB for Envoy) and slightly lower latency than Envoy-based Istio sidecars. For most workloads the difference is sub-millisecond, but at scale it adds up.

Does Linkerd support egress gateways?🔗

No. Istio provides a dedicated Egress Gateway that routes outbound traffic through a centralized proxy for policy enforcement, audit logging, and TLS origination. Linkerd has no equivalent — pods connect directly to external services.

Is Istio more complex than Linkerd?🔗

Usually yes. Istio offers more L7 functionality and extensibility (Wasm, ext_proc, OIDC, Egress Gateway, ambient mode), but requires operating a larger control plane, the Envoy ecosystem, and — in sidecar mode — a heavier per-pod proxy. Linkerd’s smaller surface area translates to a shorter learning curve and simpler day-2 operations.

How do Istio and Linkerd compare for observability?🔗

Linkerd ships linkerd-viz as a built-in add-on: Prometheus, Grafana, and a web dashboard with golden metrics (success rate, requests/sec, P99 latency) per service, route, and pod — no extra configuration. Istio emits detailed Envoy stats in Prometheus format but requires assembling the observability stack separately (Prometheus, Grafana, Kiali for topology, a tracing backend). Istio’s telemetry is more granular; Linkerd’s is simpler to get running.

Does Linkerd include a dashboard?🔗

Yes. linkerd-viz includes a Grafana dashboard and a lightweight built-in web UI (linkerd viz dashboard) that shows live golden metrics per service. Istio uses Kiali for topology visualization, which is a separate installation.

Do both automatically encrypt pod-to-pod traffic with mTLS?🔗

Yes, but only for meshed pods — pods that have the sidecar injected. A pod without a sidecar communicates in plaintext with its peers. Both projects default to PERMISSIVE / allow-plaintext mode during rollout so unmigrated workloads are not immediately broken. Once all communicating pods are meshed, strict mTLS can be enforced cluster-wide.

How does workload identity work in Linkerd vs. Istio?🔗

Both use SPIFFE X.509 SVIDs derived from the Kubernetes Service Account. The issuing CA differs: Istio uses istiod’s built-in CA (or an external CA plugged in via cert-manager); Linkerd uses linkerd-identity, which requires operators to provide a trust anchor certificate at installation time. Both rotate certificates automatically with short TTLs.

Can Istio and Linkerd span multiple Kubernetes clusters?🔗

Yes, both support multi-cluster deployments. Istio offers Primary-Remote (shared istiod) and Multi-Primary (independent control planes) models and is CNI-agnostic — it works across AKS, GKE, and OVH MKS. Linkerd uses the Service Mirror model: a controller mirrors remote Services locally so DNS resolution and mTLS work transparently across clusters. Both require a shared trust anchor / trust domain and a cross-cluster network path (VPN or interconnect).

Which ingress controllers work with Linkerd?🔗

Any controller whose pods can receive the linkerd.io/inject: enabled annotation: Envoy Gateway, Nginx, Traefik, HAProxy Ingress, Kong, and others. Once annotated, the ingress controller’s pod participates in the mesh and the ingress-to-backend leg is covered by mTLS. See the Using Ingress with Linkerd guide for controller-specific configuration notes.

Do both support the Kubernetes Gateway API?🔗

Yes. Both implement the Gateway API (HTTPRoute, GRPCRoute, TCPRoute, TLSRoute). Istio’s support is more comprehensive — it also exposes its own extension CRDs (VirtualService, DestinationRule, EnvoyFilter) alongside Gateway API resources. Linkerd’s Gateway API support covers the common routing use cases (traffic splitting, header routing, retries, timeouts) but has no Envoy-based extension layer.